Posts

Showing posts from March, 2014

What is the best way to differentiate between ccTLDs?

How to tell which algorithm your website has been hit or penalized by

Can sites do well without using spammy techniques?

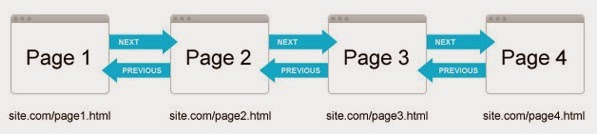

Conquering Pagination – A Guide to Consolidating your Content

What FACEBOOK and GOOGLE are Hiding from the World

Can I place multiple breadcrumbs on a page?

SEO copywriting tips: how to write a top-converting About Us page

What should sites do with pages for products that are no longer available?

Is there a way to tell Google about a mobile version of a page?

How should content be designed to rank higher?

What is a "Paid Link" - From Matt Cutts